Bitcoin is a cryptocurrency and payment system invented by a person or a group of people using the name Satoshi Nakamoto, whose real-world identity is still unknown.

It is the first cryptocurrency to solve the double-spending problem, and in doing so it introduced blockchain: a distributed ledger managed by a P2P network that removes the reliance on a single authority for trust and establishes a trustless payment system based on cryptographic proof, using proof-of-work (PoW).

A New Money or Wealth

Bitcoin is a new kind of money or wealth, created from thin air or, rather we should say, by spending electricity to run specialized computers that do a lot of computations.

Miners

No central authority is issuing Bitcoin. It is the miners who maintain the blockchain and the Bitcoin system in general. And they are the ones who create/mine all the bitcoins and own them upon creation. Others buy from them. Yes, all the bitcoins out there were first owned by some miner when they were first created or mined.

However, anybody can buy computers and become a miner by joining the network. It is as simple as installing certain software and running it. Mining bitcoins, though, is like winning a lottery in which your chances depend on your computing power. The more computing power you (or your mining pool) have, the more likely you are to mine a block, and you still need a bit of luck. Whether that will be economically viable is a different matter. How miners generate bitcoins will become clearer later.

How many miners are presently working on the Bitcoin blockchain?

Limited Supply

The Bitcoin protocol stipulates that a maximum of 21 million bitcoins (BTC) will be created by around 2140, at a diminishing rate. Roughly every 4 years the number of bitcoins created per block is halved. Thus bitcoin production slows down and at some point stops. We will do some math on it later.

It is this limited supply that convinces people to treat bitcoin as some kind of gold whose value, they think, will gradually appreciate.

Well, this limited supply is an artificial construct. We will have a short discussion regarding this at the end.

Apart from the limited supply, there are a few more things that make Bitcoin attractive to people.

Exchanges

There are exchanges that convert bitcoin to fiat currencies (real money) and vice versa.

Privacy

Within the Bitcoin network, you are represented by a number – your public key, to be precise. When you send coins to somebody else, everybody knows it. After all, the ledger is public and everybody can see everything. But they don’t know who you are in the real world. This is different from our usual debit/credit card payments, where the bank and the merchant know who the buyer is. Bitcoin is not entirely untraceable, though; tracing is just hard. The link to your identity is simply not as obvious as when you buy something with a credit card.

Borderless

The Bitcoin payment system is based on a P2P network running on the internet. One can transfer any number of bitcoins from anywhere to anywhere else without bank accounts, regulatory restrictions etc. That offers a kind of freedom and flexibility.

Nonreversible Transaction

As we will gradually see, once a transaction gets into a block and that block is accepted into the blockchain, it becomes permanent and virtually impossible to reverse. That gives people who receive money the confidence that they will not be cheated later.

This is important. As there is no reversal, the merchant does not need to trust you. That in turn allows a system where the two parties can remain anonymous.

Transparency

As mentioned, all the transactions since the beginning are open to the public, and no central authority is managing them. It is the miners and nodes (we will see later what a node is) who manage them. It will become clearer that it is in their best interest to remain honest and manage it properly. That makes for a transparent system.

Wallet

Suppose I want to have some bitcoins. I will then need a wallet. There are different wallet providers, like the Mt. Gox exchange. A wallet can manage multiple cryptocurrencies. Note that Bitcoin is the first cryptocurrency, but there are 1000+ out there.

A wallet can have multiple addresses. Each address is essentially backed by a private-public key pair. More precisely, an address is derived from the public key (it is a hash of it), but for simplicity we will treat the public key itself as the address here. With each public key there is an associated private key that stays secretly with the owner, behind the scenes.

While doing transactions, we use these keys. A public key is used to receive bitcoins and the corresponding private key is used to spend them.

By using multiple addresses, I am trying to hide the aggregate balance that I own in the blockchain. This is because everyone can see how many bitcoins an address (like an account in the real world) owns. But they don’t know for sure which addresses belong to which person behind the curtain. Well, if you spend coins from multiple addresses in one go, then one would know that all these addresses belong to you.

Public-key Cryptography

As we have mentioned, public-key cryptography plays an important role in Bitcoin/blockchain. In particular, the digital signature is an integral part of it, even if we set aside the P2P network that is built on top of a secure channel.

A digital signature employs asymmetric cryptography, usually based on RSA or elliptic-curve cryptography. Bitcoin uses the Elliptic Curve Digital Signature Algorithm (ECDSA), possibly because, for the same level of security, it requires much smaller keys and signatures than RSA-based schemes. That saves bandwidth on the blockchain P2P network.

Public-key cryptography provides two keys – a private and a public. A private key is never to be exposed to public and remains a secret with the owner. On the other hand, public key is known to all. When the owner wants to send some information, she will take a hash, say, SHA-256 of the information, called digest, “decrypt” it with her private key and send that signature along with the original information.

The recipient would take the same hash, SHA-256 in this case, of the information and compare it to the signature after “encrypting” it. If the two match then the recipient knows for sure that the information came from the person who claims to have sent it and that it has not been altered on the way.
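The sign-and-verify flow described above can be sketched with textbook RSA, which matches the “decrypt”/“encrypt” wording most directly. This is only an illustration under loud assumptions: the two primes, the names `sign` and `verify`, and the message are made up for the example, the scheme has no padding and is not secure, and Bitcoin itself uses ECDSA rather than RSA.

```python
import hashlib

# Toy textbook-RSA key built from two known Mersenne primes.
# Illustration only: real systems use padding, and Bitcoin uses ECDSA instead.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                            # public modulus
E = 65537                            # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (needs Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """SHA-256 digest of the message, reduced to fit below the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """'Decrypt' the digest with the private key to produce the signature."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """'Encrypt' the signature with the public key and compare with the digest."""
    return pow(signature, E, N) == digest_as_int(message)


message = b"pay 3.01635 BTC to Bob"
signature = sign(message)
print(verify(message, signature))                    # genuine message
print(verify(b"pay 3.01635 BTC to Eve", signature))  # altered message
```

Only the holder of `D` can produce a signature that passes `verify`, while anybody who knows `N` and `E` can run the check.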

Hash

We talked about hashes just now. The hash is another integral part of Bitcoin/blockchain. A cryptographic hash function transforms information of arbitrary length into a small, fixed-size bit string. A slight change in the input results in a drastically different output. Collisions are possible in principle – two inputs might produce the same hash value – but the chance is extremely low.

Hash is a one-way function in the sense that output can be computed easily from an input but input cannot be computed back from output.
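These properties are easy to observe with Python’s standard `hashlib` module. The following is a small sketch; the input strings are made up for the example.

```python
import hashlib

a = hashlib.sha256(b"I pay Bob 3 BTC").hexdigest()
b = hashlib.sha256(b"I pay Bob 4 BTC").hexdigest()
long_input = hashlib.sha256(b"x" * 1_000_000).hexdigest()

print(a)
print(b)

# Every digest is 256 bits, i.e. 64 hex characters, whatever the input size.
print(len(a), len(b), len(long_input))

# Count how many of the 64 hex positions differ between the two digests.
differing = sum(1 for x, y in zip(a, b) if x != y)
print(differing)
```

Changing a single character of the input changes most of the output positions, and there is no practical way to work back from a digest to its input.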

Bitcoin largely uses SHA-256. SHA stands for Secure Hash Algorithm. It was developed by the NSA.

Bitcoin Denominations

Suppose I have a private key 7 that nobody except me knows about. And say the corresponding public key is 3. So 3 is my address, which everybody knows. Suppose that, on two occasions, two persons sent me two coins, one worth 3 BTC and another worth 1.01635 BTC. Both were sent to my address 3, my only address as of now. Assume that everybody out there knows that I own those two coins.

Now that I have 4.01635 BTC, for some reason, I want to pay 3.01635 BTC to Bob. Since none of the two coins can be used alone, I must use both the coins together. Suppose the transaction fee is 0.2 BTC, something we will know later. Then, I will get back a change of 0.8 BTC.

That’s how it works in the real world, right? I want to buy $6 worth of stuff, I give a $5 note and a $2 note, and I get back a $1 note. The $1 note stays in my wallet till I spend it.

But it is different from a bank balance, where my total money stays without any denominations. I can spend any amount, within that balance. The remaining balance stays back without changing the number of notes/coins.

On the other hand, my Bitcoin wallet is more like the real-world scenario, where the number of coins can vary, depending on how I am spending and what kind of change/payments I am receiving.

But there is a difference. When somebody sent me that 1.01635 BTC, it became a single piece of coin worth 1.01635 BTC. When I need to use that, I have to use that as a whole. When I get back that 0.8 BTC as change, that change, once again becomes a single piece of coin worth 0.8 BTC.

So in Bitcoin world there is no fixed number of denominations. Whatever amount I get in a transaction, it becomes a single coin with its value.

However, in Bitcoin two units are generally used. BTC is the equivalent of USD, SGD etc. and the Satoshi is the equivalent of the cent, paisa etc. The Satoshi is named after the inventor. As mentioned earlier, there will be a maximum of 21 million BTC. 1 BTC = 100 000 000 (one hundred million) Satoshi. Alternatively, 1 Satoshi = 0.000 000 01 BTC. The lowest-denominated coin that has been transacted in the blockchain so far was 1 Satoshi.

Bitcoin Transaction

Continuing with the previous example, I want to pay 3.01635 BTC to Bob. I have two coins – one worth 3 BTC and another 1.01635 BTC. These two coins of mine will go as inputs into the transaction. There will be two outputs: one to Bob, 3.01635 BTC and the second to myself, 0.8 BTC – the change. The rest, 0.2 BTC, will be used as transaction fee.
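The arithmetic of this example transaction can be checked with a few lines of code. This is a minimal sketch that counts in Satoshi to avoid rounding problems; the helper name `btc` and the labels are made up for the example.

```python
from decimal import Decimal

SATOSHI_PER_BTC = 100_000_000


def btc(amount: str) -> int:
    """Convert a decimal BTC string into a whole number of Satoshi."""
    return int(Decimal(amount) * SATOSHI_PER_BTC)


inputs = [btc("3"), btc("1.01635")]                        # my two coins
outputs = {"Bob": btc("3.01635"), "my change": btc("0.8")}

# Whatever the outputs do not claim is left over as the transaction fee.
fee = sum(inputs) - sum(outputs.values())
print(fee, "Satoshi =", Decimal(fee) / SATOSHI_PER_BTC, "BTC")
```

Note that the fee is never written into the transaction explicitly; it is simply the difference between the input and output values.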

As mentioned earlier, everybody knows that I own those two coins. On two separate occasions, those two coins came to my address 3. That they came to my address means that, on each of those occasions, my public key 3 was mentioned in a transaction as an output. Hence, these two coins are the outputs of those two transactions. And as of now, those outputs have not been spent. Hence, they are unspent transaction outputs. This can be verified, as all records are public. That is why it is still valid to use them as inputs to a new transaction.

Input

To spend these two coins, I specify the two transactions as inputs to a new transaction. How exactly do I do that? I get the hash of each of the two transactions and list them in the new transaction. Anybody can look at a hash and figure out which transaction it is referencing.

The real owner is spending

I then create a digital signature by combining that hash with the new owner’s address (Bob’s public key, say 9, which I know). Why do I do that? Well, since I have digitally signed this with my private key (7, known only to me), anybody can verify with the corresponding public key 3 that it is the holder of that private key, and nobody else, who is saying that the two transactions be included as inputs, meaning the coins be spent.

To be more specific, two things are happening here: 1) everybody knows which address (address 3) those two coins belong to, and 2) everybody can verify the digital signature using my public key (that is 3). Nobody else’s digital signature could have been verified using my public key, right? So it is verified that the rightful owner of the coins is now instructing to spend them.

Well, all is good. It is my coins that I am spending. And I also included Bob’s address, who is the recipient, in the signature.

Output

In addition to the input section, I also have an output section, where I have listed two addresses: one is Bob’s, who is specified to be receiving 3.01635 BTC, and one is my own address – it can be 3 or a new address associated with a new private key of mine that I have already generated (note that I am using multiple addresses). The transaction fee of 0.2 BTC will go to a miner, as we will explain later.

Same Money, Spending More than Once

If we pause for a moment and think, we will realize that all is not well. It is all fine that I am paying my valid unspent coins to Bob. But what if I also create another transaction, just like the one above, and this time pay Alice instead of Bob? Wait, so I am paying the same coins to two persons? Yes, and nothing we have discussed so far prevents it, as no central authority is checking this. So far, the only thing we can do is confirm the owner and that, at this moment, the coin is still unspent. But that cannot stop somebody from spending the same coins twice at the same moment.

This problem is known as the double-spend problem.

Need a Permanent, Irreversible History

Suppose I had the money in my bank. Then I would not be dealing with Bob or Alice directly. Rather, I would ask my bank to pay both of them. But the bank would process the requests sequentially, even if I had logged in from two machines and issued the two instructions at the same time. Only after paying Bob would the bank proceed to the next transaction. By that time, it would already know that my balance was not sufficient to pay 3.01635 BTC to Alice, or that I didn’t own those coins any more.

In our case, Alice must know that the two coins given to her have not been spent earlier. For that to happen, Alice must know all previous transactions. Since Bitcoin did not want a single trusted party, like a bank, all these transactions must be publicly announced. And all these publicly announced transactions somehow must create a single version of the history. That means, once the coins are spent to pay Bob, this transaction must go inside that history. And once that is done, attempt to pay the same coins to Alice again can be found to be invalid, by looking at that history.
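Once there is a single agreed history, rejecting the second payment is simple bookkeeping. The following sketch keeps a set of unspent coins and applies transactions one after another; the transaction ids (`tx_a`, `tx_b` and so on) and the function name are hypothetical placeholders, and signature checks are left out.

```python
# Unspent coins known from the public history:
# (transaction id, output index) -> owner. The ids are made-up placeholders.
unspent = {("tx_a", 0): "me", ("tx_b", 0): "me"}


def apply_transaction(inputs, new_outputs):
    """Accept a transaction only if every coin it spends is still unspent."""
    if any(ref not in unspent for ref in inputs):
        return False                  # some coin was already spent
    for ref in inputs:
        del unspent[ref]              # inputs are consumed as a whole
    unspent.update(new_outputs)       # outputs become new unspent coins
    return True


coins = [("tx_a", 0), ("tx_b", 0)]
paid_bob = apply_transaction(coins, {("tx_c", 0): "Bob", ("tx_c", 1): "me"})
paid_alice = apply_transaction(coins, {("tx_d", 0): "Alice", ("tx_d", 1): "me"})
print(paid_bob, paid_alice)
```

The check only works because both transactions are applied to the same history in a fixed order, which is exactly what the rest of the design has to guarantee without a bank.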

Blockchain

Bitcoin came up with a solution for the double-spending problem using a timestamp server combined with PoW. This is called blockchain. It serializes transactions by putting them in a permanent, irreversible blockchain by taking majority votes from the nodes.

At this point, it can be stressed that even though blockchain was invented for Bitcoin by the inventor of Bitcoin, blockchain is an independent technology on its own that can be used for many other applications. Bitcoin needs blockchain, not the other way around.

Block

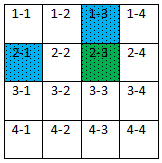

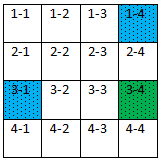

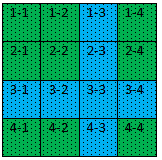

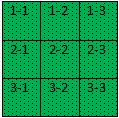

So far we were discussing transactions. Now we will talk about the block, in which a number of valid transactions are packed together. Such a block also includes the hash of its previous block, and it is then added to the existing chain of blocks.

Thus a blockchain is a chain of blocks. Keeping the hash of the previous block inside a block makes sure that no arbitrary block fits in the blockchain.

Suppose, at this moment we have 500,000+ blocks in the Bitcoin blockchain. If we change a little history in say, 10,234th block then 10,235th block would become invalid. After all, 10,235th block takes into it the hash of 10,234th block. As 10,235th block becomes invalid, the next one will also become invalid and so on, all the way till the most recently created one.

We can think of this relation as a parent-child genetic relation. A block can be thought of as a child of its previous block, where some genes from its parent (previous block) are propagated to it. Now if the parent’s genes change, the child’s genes will no longer match its parent’s. The child’s genes then also have to be changed to fit its parent’s. But we are not talking about only two generations. We have to change all the descendants of the altered parent, down to the last generation, to keep the lineage valid.
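The way a change ripples down the chain can be seen in a tiny model. This is a simplified sketch, assuming a block is just a piece of text plus the hash of its predecessor; the field names and helper functions are made up for the example and do not reflect Bitcoin’s actual block format.

```python
import hashlib


def block_hash(prev_hash: str, data: str) -> str:
    return hashlib.sha256((prev_hash + data).encode()).hexdigest()


def build_chain(payloads):
    chain, prev = [], "0" * 64
    for data in payloads:
        h = block_hash(prev, data)
        chain.append({"prev": prev, "data": data, "hash": h})
        prev = h
    return chain


def first_invalid(chain):
    """Index of the first block that no longer fits the chain, or None."""
    prev = "0" * 64
    for i, block in enumerate(chain):
        recomputed = block_hash(block["prev"], block["data"])
        if block["prev"] != prev or block["hash"] != recomputed:
            return i
        prev = block["hash"]
    return None


chain = build_chain(["block 0", "block 1", "block 2", "block 3"])
print(first_invalid(chain))       # untouched chain

chain[1]["data"] = "altered history"
print(first_invalid(chain))       # block 1 no longer matches its own hash

chain[1]["hash"] = block_hash(chain[1]["prev"], chain[1]["data"])
print(first_invalid(chain))       # repairing block 1 breaks the link to block 2
```

Repairing one block only moves the problem to the next one, so an attacker has to redo every block that follows the one she altered.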

Nodes

Bitcoin implements blockchain using a P2P network. Blockchain, as mentioned, is the single version of history. It is essentially a ledger that is maintained by the participating computers in the system. A node is such a participating computer that maintains a ledger.

We already saw what a miner is. To repeat, miners are the subset of nodes that actually create blocks and earn/mine bitcoins in the process.

Non-miner nodes are plain volunteers. Why does a node want to be a volunteer? Well, a node can be maintained by a merchant who accepts bitcoins for his/her products. There were 100,000 such merchants as of Feb 2015. A merchant would feel more comfortable maintaining a ledger of her own and doing certain verification herself before accepting a payment.

A node has a voting right. When a miner creates a block, she broadcasts it to the network, hoping that it will be added to the blockchain and that she will get the reward. The onus is on the nodes to decide whether that can be done. Only if the majority of nodes accept it as a valid block is it added.

How many nodes are there?

Each of the nodes would verify on its own that the transactions are all valid inside the block. For example, the money that a person wants to spend really belongs to her and that it is not already spent. And that the input value is higher than (the additional money goes to the miner as transaction fees) or equal to the output value etc.

There is one more thing that a node will check – PoW.

Proof-of-Work

Creation of a block, as we have already seen, takes no time. Well, that’s a problem. As mentioned earlier, at this moment, we have 500,000+ blocks in the Bitcoin blockchain. That is the Bitcoin history. If a block can be created very fast, an attacker can create the history in no time. If something can be changed so fast then that is anything but history.

We need to create the blockchain in a way so that it resembles a true history in the sense that it cannot be altered so easily.

Make Block Creation Difficult

To do that, it becomes imperative to add a certain level of artificial difficulty to adding a block to the blockchain ledger. Only then, when we see a state of the blockchain that the majority of the nodes have accepted as good, can we be confident that it is practically impossible to change it or to create a longer one in the future. And only then have we established a truly trustless distributed system.

Nonce

We have seen that a miner puts some transactions in the block and combines them with a timestamp, block number, hash of the previous block etc. But this, when fed as input into a hash function (say, SHA-256), would most likely not produce a certain number of zeros at the beginning of the output. PoW requires that a miner concatenates a nonce (number used once) to that input such that the generated output contains a certain number of zeros at the beginning.

Now that is very difficult. Block 508755, which got added to the blockchain a while ago, computed a hash that looks like this:

000000000000000000578f796a92e6f99c180e1084bced6a049b4be6d6764e74

And it used the nonce 1810240736 (combined with input) to come up with the above hash value or digest.

On average, it takes around 10 minutes of trying prospective nonce values on powerful specialized computers (or mining pools) before somebody finally comes up with the magic nonce. If the required number of leading zero bits is increased by one, finding such a hash output takes double the time on average (one more zero hexadecimal digit, as in the hash above, takes 16 times as long), meaning the complexity is exponential in the number of zeros.
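The search for a nonce can be tried out at a much smaller scale. The sketch below is a toy under stated assumptions: the header is a made-up string, a single SHA-256 is used, and the difficulty is expressed as a number of leading zero hex digits. Bitcoin itself hashes a binary block header with double SHA-256 and compares the result against a numeric target.

```python
import hashlib


def pow_hash(header: str, nonce: int) -> str:
    return hashlib.sha256(f"{header}{nonce}".encode()).hexdigest()


def mine(header: str, zeros: int) -> int:
    """Try nonces 0, 1, 2, ... until the hash starts with enough hex zeros."""
    nonce = 0
    while not pow_hash(header, nonce).startswith("0" * zeros):
        nonce += 1
    return nonce


def verify(header: str, nonce: int, zeros: int) -> bool:
    """Checking the work takes a single hash, however long the search took."""
    return pow_hash(header, nonce).startswith("0" * zeros)


header = "block 1 | prev=00ab | my payment to Bob"
nonce = mine(header, zeros=4)
print(nonce, pow_hash(header, nonce))
print(verify(header, nonce, zeros=4))
```

With 4 hex zeros the loop needs tens of thousands of attempts on average; each additional hex zero multiplies that by 16, while `verify` stays a single hash.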

Adjust Difficulty

If 10 minutes is taken to create a block, it takes 2 weeks to create 2016 blocks. It is at this interval (2 weeks) that the Bitcoin protocol would adjust the complexity to ensure that on average it continues to take around 10 minutes to create a block. This complexity, called difficulty in Bitcoin protocol, needs to be calibrated periodically because the network is changing, mostly becoming more powerful or at times may be temporarily becoming a bit weaker.
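The periodic calibration can be sketched as a simple proportional rule. This is a simplified model: the function name and the difficulty values are made up, and the limit of a factor of 4 per adjustment reflects how Bitcoin bounds a single retarget as far as this sketch assumes.

```python
TARGET_SPACING_MIN = 10
BLOCKS_PER_PERIOD = 2016
EXPECTED_MIN = TARGET_SPACING_MIN * BLOCKS_PER_PERIOD   # 20160 minutes


def retarget(old_difficulty: float, actual_minutes: float) -> float:
    """Scale difficulty so the next 2016 blocks take about two weeks again."""
    # Limit a single adjustment to a factor of 4 in either direction.
    actual = min(max(actual_minutes, EXPECTED_MIN / 4), EXPECTED_MIN * 4)
    return old_difficulty * EXPECTED_MIN / actual


print(EXPECTED_MIN / (60 * 24))             # length of one period in days
print(retarget(1000.0, EXPECTED_MIN / 2))   # blocks came twice as fast
print(retarget(1000.0, EXPECTED_MIN * 2))   # blocks came half as fast
```

If the last 2016 blocks arrived too quickly, the difficulty goes up in proportion; if they arrived too slowly, it goes down.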

So, we see that the whole Bitcoin ledger, called a blockchain, is a single chain of blocks, each of which took around 10 minutes to create. Since each of them used the hash of its previous block, if an attacker wants to recreate history, all these blocks, half a million+, have to be recreated sequentially. At 10 minutes per block, that would take more than 9 years. By the time she has recreated all that is present right now, many more blocks would already have been added at the end of the blockchain. And thus PoW ensures a ledger that is virtually impossible to alter and can be considered permanent.

Node Verifies PoW

When nodes choose a block, the protocol expects them to verify that the block has done the PoW. A node can check it by combining the nonce along with the block’s input and computing the hash. And then matching the computed hash with the hash specified in the block. They also need to check that it contains the required number of zeros at the beginning.

Chain With Greatest PoW effort Wins

Nodes also choose the block that, when added to the chain, results in the longest blockchain. By the longest blockchain, we mean the one that has the greatest PoW effort invested in it.

Genesis Block

As the name implies, blockchain contains all the transactions that happened since the beginning of the time for Bitcoin. The first block of the blockchain is called a genesis block – block 0.

Transaction Fee

Remember, earlier we spoke about a transaction fee. When I want to pay Bob some bitcoins, I create a transaction and send it to the network. It ends up in mempool (memory pool). A miner picks it up later and places it inside the block that she is creating. While doing so, a miner looks at the transactions in mempool that are offering it better fees. After all, there are many transactions to pick from. Then why not choose the ones offering the highest fees?
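A miner’s choice can be pictured as a simple greedy selection. This is a sketch with a hypothetical mempool; it assumes a block holds a fixed number of transactions and compares absolute fees, whereas real miners are limited by block size and therefore compare fee per unit of size.

```python
# Hypothetical mempool entries: (transaction id, fee in Satoshi).
mempool = [("tx1", 20_000_000), ("tx2", 5_000), ("tx3", 150_000), ("tx4", 0)]


def pick_transactions(pool, max_count):
    """Greedy choice: take the transactions that pay the most."""
    return sorted(pool, key=lambda tx: tx[1], reverse=True)[:max_count]


chosen = pick_transactions(mempool, max_count=2)
print([txid for txid, _ in chosen])
print(sum(fee for _, fee in chosen), "Satoshi in fees")
```

A transaction that offers no fee, like `tx4` here, keeps being passed over as long as better-paying ones are waiting.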

Transaction Processing Time

Hence, it is important to provide a good transaction fee, if I want my transaction to be confirmed/completed fast.

Even if I pay a good fee, we know the transaction has to get inside a block, and block creation takes around 10 minutes. The usual safe practice in the network is then to wait for 6 confirmations, that is, the block containing the transaction plus 5 more blocks added after it, so that it is sufficiently buried inside good enough history.

So 1 hour should be a good estimate for transaction processing time, if I pay good transaction fee. If I pay no fee, well it may never go through.

Don’t Forget to Receive Change

A transaction must have input values higher than or equal to the output values, as mentioned earlier. Otherwise the transaction is invalid, for obvious reasons. I also mentioned that I need to put my own address as an output so that the change comes back to me. What happens if I forget to do so and the change is very high? Well, the change (small or large) will end up with the miner! But how would that happen?

Coinbase or Generational Transaction

Well, as of now we have seen that a miner adds some transactions inside a block, does the PoW and, if that succeeds, broadcasts the block to the network for acceptance. If the majority of nodes accept it, it gets added to the blockchain. We have also seen that each time such a block gets added to the blockchain, the miner gets a reward in bitcoins.

We also spoke about transaction fee – when a miner adds a transaction into a block, it gets the transaction fee as specified in that transaction.

It is the coinbase or Generational Transaction that adds this reward and cumulative transaction fees (for all the transactions included in the block), as the first transaction in the block. Miner’s address is specified there as the output.

For example, assume that a miner creates a block that includes my transaction. Suppose no other transaction is added to that block. The miner would add the coinbase as the first transaction, where she would add 12.7 BTC as an output to herself. 12.5 BTC is the reward for a block being added to the blockchain and 0.2 BTC is the transaction fee for adding my transaction.
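The value of that coinbase output is just the block reward plus the fees of the included transactions. A minimal sketch in Satoshi, using the numbers from the example (the variable names are made up):

```python
SATOSHI_PER_BTC = 100_000_000

block_reward = 1_250_000_000     # 12.5 BTC, the reward used in the example
fees = [20_000_000]              # 0.2 BTC from the single included transaction

# The miner pays herself the reward plus all collected fees.
coinbase_output = block_reward + sum(fees)
print(coinbase_output, "Satoshi =", coinbase_output / SATOSHI_PER_BTC, "BTC")
```

With more transactions in the block, `fees` simply gets one entry per transaction.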

New Bitcoins Mined

My 0.2 BTC is from old supply that the miner gets. But the 12.5 BTC is a new supply of coins to the system. It is how new bitcoins are mined. In case you are not tracking bitcoin value, as I am writing this, 12.5 BTC is equivalent to almost $100K.

Future of Incentive for the Miners

However, the reward was not always 12.5 BTC, and it is not going to stay the same in the future either. When Bitcoin started in 2009, a miner would get 50 BTC for adding a block. At 10 minutes per block, it took about 4 years to create the first 210,000 blocks, generating 10.5 million BTC. Every 210,000 blocks (about 4 years), the reward per block halves, so that bitcoin generation also halves. And this is how, by around 2140, it is expected that almost all of the 21 million BTC will have been generated.

Long before that, the reward for adding a new block to the blockchain will have reduced drastically. It is expected that many people (around 5 million in 2017) would own bitcoins by then and that transaction volume would increase as well. The fees from that high number of transactions would then be sufficient for the miners to keep maintaining the bitcoin blockchain ledger.
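The halving schedule and the resulting supply limit can be reproduced in a few lines. This is a sketch that assumes the reward is an integer number of Satoshi that is halved (rounding down) every 210,000 blocks; the function and variable names are made up.

```python
SATOSHI_PER_BTC = 100_000_000
HALVING_INTERVAL = 210_000        # blocks, roughly 4 years at 10 minutes each


def block_reward(height: int) -> int:
    """Reward in Satoshi for the block at the given height."""
    halvings = height // HALVING_INTERVAL
    return (50 * SATOSHI_PER_BTC) >> halvings     # integer halving


total = 0
era = 0
while block_reward(era * HALVING_INTERVAL) > 0:
    total += HALVING_INTERVAL * block_reward(era * HALVING_INTERVAL)
    era += 1

print(block_reward(0) / SATOSHI_PER_BTC)          # reward in the first era
print(block_reward(420_000) / SATOSHI_PER_BTC)    # reward in the third era
print(era, "eras,", total / SATOSHI_PER_BTC, "BTC in total")
```

Because each era creates half as much as the one before, the total approaches 21 million BTC but never quite reaches it, and at about 4 years per era the last rewards fall around the year 2140.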

Coins can be Lost Forever

As for transaction fee, what if the miner forgets to collect it, meaning what if she forgets to add it in the coinbase transaction, where she awards herself? Well, the coins will be lost forever. And it happened many times already.

Remember, input transactions are all spent when the new transaction is accepted, meaning they are all gone. New output transactions must be created equaling the input value. If not, and that can happen when the miner fails to collect the difference as fees, the difference is lost forever.

Incentive for Being Honest

Having seen how the blockchain works, we find that to subvert or defraud the system, one would need a lot of computing power. The attacker has no way to alter the history, as that would take her years. All she can do is create a bad/invalid block and bring many nodes of her own into the system, and then get those nodes to vote for her bad block so that it gets added to the blockchain.

The question is, if somebody can create a block very fast and can then manage to get many nodes to vote for it, why would she not create a good block and earn the rewards? The attacker would be better off being an honest miner and being rewarded by the system. This economic incentive is built into the Bitcoin protocol.

In a nutshell, you need a lot of computing power to be a bad guy. But if you indeed have a lot of computing power, you can as well become a good guy and get the rewards from the system.

You are Just a Number and Yet Very Secure

At this point, as we have a clearer picture of the Bitcoin payment system, we realize that my owning a bitcoin means nothing more than that a number (my private key, which controls the spending of it) owns it. I am nothing. If somebody knows the number, she can go ahead and spend that coin.

At this moment, you might wonder: why not then try to guess the private key? After all, all the public keys, owning all the bitcoins, are already known. All we have to do is take a public key and try to figure out the corresponding private key. If we can do that, then the associated coin belongs to us.

But this is where cryptography comes into play. As mentioned already, Bitcoin uses Elliptic Curve Cryptography. There is another Public-key Cryptography system, called RSA. It also generates similar public/private key pairs and they are secure as well. Explaining in terms of RSA would be easier to understand as to why it is difficult to do so.

RSA is based on integer factorization. Suppose a number 55 is given. If we could find all the factors (5, 11) of it, we could break RSA. For this, we need to take each of the numbers from 2 to √55 and try to divide 55. Well, faster methods than this are also available. And yet it is very difficult. Why?
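The naive approach described above, trying every number from 2 up to the square root, looks like this as a sketch (the function name is made up for the example):

```python
import math


def trial_division(n: int):
    """Return (p, n // p) for the smallest factor p > 1, or None if n is prime."""
    for p in range(2, math.isqrt(n) + 1):
        if n % p == 0:
            return p, n // p
    return None


print(trial_division(55))      # the example from the text
print(trial_division(3233))    # a slightly larger product of two primes
print(trial_division(13))      # a prime has no such factor pair
```

The loop runs at most about √n times, which is instant for 55 but grows out of reach very quickly as the number of digits increases.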

Because the numbers we are dealing with here are very large. Even with 512-bit RSA, which is considered weak, we have to find two prime factors, each having around 77 decimal digits, from their product of around 155 decimal digits. For comparison, 12 zeros after 1 make a trillion (3 zeros make a thousand, 6 zeros a million, 9 zeros a billion, and 12 zeros a trillion). Now we can imagine how big a number we are talking about. Factoring such a number by trying divisors one by one is hopeless; it would take far more than billions of years. Hence, given the public key, searching for the corresponding private key is like finding an ant in the universe.

You might as well think of creating an army of millions of public/private key pairs a priori and keeping a lookout for a transaction in the blockchain that names one of these public keys as an output. If you found one, you could immediately use the corresponding private key to control the associated coin. But once again, the key space is so large that a properly generated key pair is unlikely ever to be generated by anyone else again.

Waste of Electricity

One thing that bothers people is the tremendous amount of electricity that is spent on generating the PoW. As we have realized by now, all the miners are trying to add their own blocks to the blockchain. But only one succeeds at a time. That way, all the work done by the rest is a plain waste of energy (and hardware life). And it is not a small amount of energy.

We are doing PoW because we don’t want to have a single authority that we have to trust. It is achieving the trustless consensus in a distributed system that we are spending this energy for.

Future

Security and Viability

One might fear that quantum computing might be the end of public-key cryptography. Indeed, the integer factorization problem can be solved very fast using a full-scale quantum computer, and the same would break elliptic-curve algorithms as well. Research is ongoing into quantum-resistant cryptographic systems that utilize other areas of mathematics.

Even if we forget about quantum computing, it is important to note that, nobody ever said that there would not be any faster algorithm for, say, integer factorization. And the same applies for elliptic curve.

Such a day, when it will be found, will create many other bigger problems for sure. But cryptocurrency would be an instant direct victim.

On Limited Supply

As for limited supply of 21 million BTC, well, that is an artificial limitation. In terms of math and technology there is no restriction. So the limited supply of Bitcoin or any other cryptocurrency for that matter is set by the creator of the respective coin. I mean, it is not really like diamond or gold. Artificial scarcity does not mean it is truly rare in nature.

For example, we already discussed a future in which no bitcoin can be mined anymore. In that future, it is the transaction fee that is expected to incentivize the miners to maintain the blockchain. But whether that is going to be economically viable for all – miners and spenders – is not quite clear now. Transaction fees shoot up from time to time.

We should also remember that 80% of bitcoins are already mined. The remaining 20% will be mined over the next 120 years. So in a sense, most of the supply is already here.

Is the transaction volume good enough right now? Will Bitcoin decide to increase the supply in future, in case miners stop working? Will the bitcoin owners agree to pay high enough transaction fee?

As for limited supply, there is one more thing we need to remember – there are 1000+ cryptocurrencies out there. Well, you can also start your own cryptocurrency. As long as enough people have faith in your currency, buy it and transact in it, all is well.
