Year 2019

Jul

4th Jul 2019 (110th) – Detecting the Start Node of a Loop in a Singly Linked List, if Exists

Jun

27th Jun 2019 (109th) – Building the Highest Stack of Cuboids of Varying Length, Height, and Width Without Rotating in Non-Increasing Order From Bottom to Top

20th Jun 2019 (108th) – Detecting Poison in 1 Out of 2^n Bottles Using n Strips That Change Color Only When Poison is Poured on Them

13th Jun 2019 (107th) – Searching in a Sorted and Rotated Array

6th Jun 2019 (106th) – Delete a Node from BST

May

30th May 2019 (105th) – Retrieving a Random Item from BST

23rd May 2019 (104th) – Optimizing SQL Queries Using Join Hints

16th May 2019 (103rd) – Largest Rectangular Area in a Histogram in O(n)

9th May 2019 (102nd) – Efficient Construction of Hierarchical Dropdown for UI

2nd May 2019 (101st) – Hierarchical and Recursive Queries in T-SQL

Apr

25th Apr 2019 (100th) – Segment Tree (Query and Update)

18th Apr 2019 (99th) – Segment Tree (Building)

11th Apr 2019 (98th) – Maximum Rectangular Area in Histogram (Divide and Conquer)

4th Apr 2019 (97th) – Trapping Rain Water

Mar

28th Mar 2019 (96th) – Dutch National Flag Problem

21st Mar 2019 (95th) – Permutation

14th Mar 2019 (94th) – Post-order sequence to binary tree, check if BST

7th Mar 2019 (93rd) – Pre-order sequence to binary tree, check if BST

Feb

28th Feb 2019 (92nd) – Task Dependencies, Build Execution Order, if Any (using DFS)

21st Feb 2019 – Missed, On Leave

14th Feb 2019 (91st) – Build BST in an Efficient Way to Count Height of Each Node (Contd.)

7th Feb 2019 (90th) – Build BST in an Efficient Way to Count Height of Each Node (Contd.)

Jan

31st Jan 2019 (89th) – Build BST in an Efficient Way to Count Height of Each Node (Contd.)

25th Jan 2019 (88th) – Build BST in an Efficient Way to Count Height of Each Node

17th Jan 2019 (87th) – Common First Ancestor of Two Nodes in a Binary Tree with All Unique Values

10th Jan 2019 (86th) – Check if a Binary Tree is Balanced

3rd Jan 2019 (85th) – Number of Paths with a Certain Sum in a Binary Tree (Top-down)

Year 2018

Dec

27th Dec 2018 (84th) – Number of Subsequences Making a Certain Sum In an Array

20th Dec 2018 (83rd) – Number of Paths with a Certain Sum in a Binary Tree (Bottom-up)

13th Dec 2018 (82nd) – Binary Tree to Doubly Linked List

5th Dec 2018 (81st) – Given a BST, Find All Input Sets That Can Build it

Nov

28th Nov 2018 (80th) – Number of ways a BST can be built with n distinct keys

21st Nov 2018 (79th) – Merge Two Lists in All Possible Ways Preserving Relative Order of Elements Within Each List

14th Nov 2018 (78th) – Two-way/Bidirectional Search BFS

7th Nov 2018 (77th) – Detecting Cycle in a Directed Graph

Oct

31st Oct 2018 (76th) – Drawing with HTML5 <canvas> and JavaScript, Rotation of Axes, Arrow Drawing

24th Oct 2018 (75th) – CA, ICA, Chain of Trust

17th Oct 2018 (74th) – How does SSL/TLS work

10th Oct 2018 (73rd) – Self-signed SAN Certificate for localhost Using OpenSSL

3rd Oct 2018 (72nd) – Gradient Descent

Sep

26th Sep 2018 (71st) – Simple Linear Regression Using Gradient Descent

19th Sep 2018 (70th) – Simple Linear Regression Using Linear Least Squares

12th Sep 2018 (69th) – Multiple Linear Regression Demo Using R

5th Sep 2018 (68th) – Cycle Detection Using Union by Rank and Path Compression in an Undirected Graph

Aug

29th Aug 2018 (67th) – Union by Rank and Path Compression

22nd Aug 2018 – Missed, Public Holiday

15th Aug 2018 (66th) – Stable Roommates Problem (continued)

8th Aug 2018 (65th) – Stable Roommates Problem (continued)

1st Aug 2018 (64th) – Stable Roommates Problem

Jul

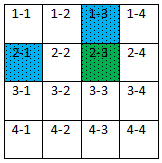

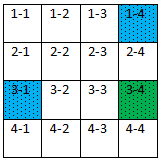

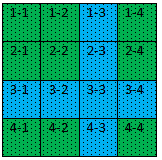

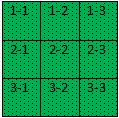

25th Jul 2018 (63rd) – 2-d Array Printing in Spiral order

18th Jul 2018 (62nd) – Stable Marriage Problem (continued)

11th Jul 2018 (61st) – Stable Marriage Problem

4th Jul 2018 (60th) – DFS

Jun

27th Jun 2018 (59th) – BFS

20th Jun 2018 – Cancelled

13th Jun 2018 (58th) – Ford-Fulkerson Method (Max-flow)

6th Jun 2018 – Missed, On Leave

May

30th May 2018 – Missed, On Leave

23rd May 2018 (57th) – Karger’s Algorithm (Minimum cut)

16th May 2018 (56th) – Solution – Currency Arbitrage with Increasing Rate

11th May 2018 – Cancelled

4th May 2018 – Cancelled

Apr

27th Apr 2018 – Cancelled

20th Apr 2018 – Cancelled

13th Apr 2018 – Cancelled

6th Apr 2018 – Cancelled

Mar

30th Mar 2018 – Missed, Public Holiday

23rd Mar 2018 (55th) – Bitcoin – Simple Payment Verification (SPV)

16th Mar 2018 (54th) – Cryptographic Hash Function – Properties

9th Mar 2018 (53rd) – Collation in MS SQL Server

2nd Mar 2018 (52nd) – Solution – Currency Arbitrage with Decreasing Rate

Feb

23rd Feb 2018 (51st) – Merkle Tree

16th Feb 2018 – Missed, Chinese New Year

9th Feb 2018 (50th) – RSA

2nd Feb 2018 (49th) – Solution – Currency Arbitrage

Jan

26th Jan 2018 (48th) – Overview of Bitcoin and Blockchain

19th Jan 2018 (47th) – Johnson’s Algorithm

12th Jan 2018 (46th) – Dijkstra’s Problem with Negative Edge

5th Jan 2018 (45th) – Solution – Sprint Completion Time

Year 2017

Dec

29th Dec 2017 – Missed, On Leave

22nd Dec 2017 – Missed, On Leave

15th Dec 2017 (44th) – Rod Cutting Problem

8th Dec 2017 (43rd) – Task Scheduling – Unlimited Server

1st Dec 2017 (42nd) – Solution – RC Election Result

Nov

24th Nov 2017 (41st) – Traveling Salesman Problem (Brute force and Bellman–Held–Karp)

17th Nov 2017 (40th) – Hamiltonian Path

10th Nov 2017 (39th) – Coin Exchange – Min Number of Coins

3rd Nov 2017 (38th) – Solution – Choosing Oranges

Oct

27th Oct 2017 – Missed, On Leave

20th Oct 2017 (37th) – Coin Exchange – Number of Ways

13th Oct 2017 – Missed, JLT D&D

6th Oct 2017 (36th) – Solution – Team Lunch

Sep

29th Sep 2017 (35th) – Floyd-Warshall Algorithm

22nd Sep 2017 (34th) – Executing SP Using EF; Transaction in Nested SP

15th Sep 2017 (33rd) – Solution – FaaS; Pseudo-polynomial Complexity

8th Sep 2017 – Missed, JLT Family Day

1st Sep 2017 – Missed, Hari Raya

Aug

25th Aug 2017 (32nd) – Multithreaded Programming

18th Aug 2017 (31st) – Knapsack Problem

11th Aug 2017 (30th) – Vertex Coloring

4th Aug 2017 (29th) – Solution – Scoring Weight Loss

Jul

28th Jul 2017 (28th) – Minimum Spanning Tree – Kruskal and Prim

21st Jul 2017 (27th) – Pseudorandom Number Generator

14th Jul 2017 (26th) – Rete Algorithm

7th Jul 2017 (25th) – Solution – Manipulating Money Exchange

Jun

30th Jun 2017 (24th) – Rules Engine

23rd Jun 2017 (23rd) – Inducting Classification Tree

16th Jun 2017 (22nd) – Incision into Isolation Level; Interpreting IIS Internals; Synchronizing Web System

9th Jun 2017 (21st) – Maximum Subarray Problem

2nd Jun 2017 (20th) – Solution – Making Money at Stock Market

May

26th May 2017 (19th) – Understanding Correlation Coefficient; k-NN Using R

19th May 2017 (18th) – k-d Tree and Nearest Neighbour Search

12th May 2017 (17th) – Bellman Ford Algorithm

5th May 2017 (16th) – Solution – Company Tour 2017 to Noland

Apr

28th Apr 2017 (15th) – Models in Machine Learning; k-Nearest Neighbors (k-NN)

21st Apr 2017 (14th) – Edit/Levenshtein Distance

14th Apr 2017 – Missed, Good Friday

7th Apr 2017 (13th) – Solution – No Two Team Member Next to Each Other

Mar

31st Mar 2017 (12th) – N-queens

24th Mar 2017 (11th) – Longest Common Subsequence (LCS)

17th Mar 2017 (10th) – Dijkstra’s Algorithm

10th Mar 2017 (9th) – Infix, Prefix (Polish), Postfix (Reverse Polish)

3rd Mar 2017 (8th) – Order 2-D Array in all Directions & Find all Triplets with Sum Zero in an Array

Feb

24th Feb 2017 (7th) – Trailing Zeros in a Factorial

17th Feb 2017 (6th) – Is this Tree a BST?

10th Feb 2017 (5th) – Given a Number, Find the Smallest Next Palindrome

3rd Feb 2017 (4th) – Merge n Sorted Lists, Each Having m Numbers, Into a Sorted List

Jan

27th Jan 2017 – Missed, Chinese New Year Eve

20th Jan 2017 (3rd) – Shortest Exit from Maze

13th Jan 2017 (2nd) – Finding Fibonacci – Exponential vs. Linear

6th Jan 2017 (1st) – Gmail API with OAuth 2.0

Other Posts

Problems in JLTi Code Jam